The Top 10 Most-Cited Papers in Music Technology

What is the most-cited paper in music technology? A few weeks ago I informally posed this question on social media (Twitter, Facebook). A nice conversation followed, which I summarize here.

Books vs papers

The question focuses on articles, as opposed to books. The rationale is to identify the most influential articles in music technology: compared to books, articles tend to be published faster and to focus more on reporting discoveries and presenting new approaches. Top-cited books that were suggested but are excluded from this list are:

- Helmholtz (1877): On the Sensations of Tone as a Physiological Basis for the Theory of Music (1651 citations).

- Roads (1996): The Computer Music Tutorial (1543 citations).

- Gold, Morgan and Ellis (1999): Speech and audio signal processing: processing and perception of speech and music (892 citations).

What is music tech?

Music technology is a niche and young field compared to math or physics. Here, the term music technology is used interchangeably with sound and music computing. This discipline looks into both music and sound from an interdisciplinary standpoint, borrowing methods and techniques from science, engineering, social sciences and the arts. It aims at understanding, modeling and generating sound and music using computation, as well as suggesting future uses of technologies for sound and music.

How to retrieve the most-cited papers?

The most efficient way of finding the top-cited papers in music tech was to informally ask the music tech community on social media and draw on its expertise, which helped to define the problem and retrieve well-known papers from different areas. I also tried to apply techniques suggested by a generic Quora post, How do you find out the most cited paper in a research field?, which pointed me towards looking at prominent profiles in the field and checking their most cited publications (as opposed to finding reviews written by them, as that post suggests).

The top-10 list

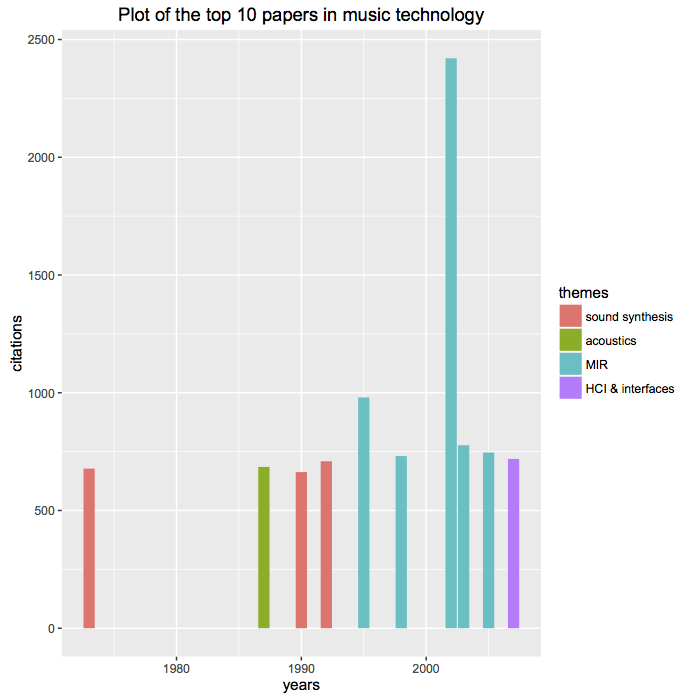

The final top 10 list that emerged from these conversations is:

- Tzanetakis & Cook (2002): Musical genre classification of audio signals (2420 citations).

- Ghias, Logan, Chamberlin and Smith (1995): Query by humming: musical information retrieval in an audio database (980 citations).

- Smaragdis & Brown (2003): Non-negative matrix factorization for polyphonic music transcription (777 citations).

- Bello, Daudet, Abdallah, Duxbury, Davies and Sandler (2005): A tutorial on onset detection in music signals (746 citations).

- Smaragdis (1998): Blind separation of convolved mixtures in the frequency domain (731 citations).

- Jordà, Geiger, Alonso and Kaltenbrunner (2007): The reacTable: exploring the synergy between live music performance and tabletop tangible interfaces (719 citations).

- Smith (1992): Physical modeling using digital waveguides (709 citations).

- Smith & Abel (1987): Closed-form least-squares source location estimation from range-difference measurements (685 citations).

- Chowning (1973): The synthesis of complex audio spectra by means of frequency modulation (678 citations).

- Serra & Smith (1990): Spectral modeling synthesis: A sound analysis/synthesis system based on a deterministic plus stochastic decomposition (663 citations).

Observations

The topics of the papers include sound synthesis (physical modeling synthesis, frequency modulation synthesis, spectral modeling synthesis); acoustics (source localization); music information retrieval (e.g. automatic musical genre classification, polyphonic music transcription, onset detection, source separation); and HCI and new interfaces. In general, the titles of the publications are accurate and novel, and they strike a balance between the generic and the specific.

Eight out of the 10 publications come from the USA, and the other two from Europe. The earliest publication dates back to 1973 and the most recent was published in 2007. In general, publications fall within the range 1990–2007. It therefore seems that a paper needs some years to reach a fair number of citations, potentially around a decade at least. With one exception (Tzanetakis & Cook), a well-cited paper in the field seems to have between 650 and 1K citations.
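The year and citation figures in this paragraph can be re-derived from the list itself. The snippet below is only a minimal sketch: the years and citation counts are transcribed by hand from the top-10 list, and the names `PAPERS` and `summarize` are made up for this illustration.

```python
# (label, year, citations), transcribed by hand from the top-10 list above.
PAPERS = [
    ("Tzanetakis & Cook", 2002, 2420),
    ("Ghias et al.", 1995, 980),
    ("Smaragdis & Brown", 2003, 777),
    ("Bello et al.", 2005, 746),
    ("Smaragdis", 1998, 731),
    ("Jordà et al.", 2007, 719),
    ("Smith", 1992, 709),
    ("Smith & Abel", 1987, 685),
    ("Chowning", 1973, 678),
    ("Serra & Smith", 1990, 663),
]


def summarize(papers):
    """Return the year span and simple range counts for a list of papers."""
    years = [year for _, year, _ in papers]
    citations = [count for _, _, count in papers]
    return {
        "earliest_year": min(years),
        "latest_year": max(years),
        # Papers published between 1990 and 2007 (inclusive).
        "in_1990_2007": sum(1990 <= y <= 2007 for y in years),
        # Papers with between 650 and 1000 citations (inclusive).
        "in_650_1000_citations": sum(650 <= c <= 1000 for c in citations),
    }


summary = summarize(PAPERS)
for key, value in summary.items():
    print(f"{key}: {value}")
```

With these numbers, the two papers outside 1990–2007 are Chowning (1973) and Smith & Abel (1987), and Tzanetakis & Cook is the only paper above 1K citations.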

Most papers are journal articles (Tzanetakis & Cook, Bello et al., Smaragdis, Smith, Smith & Abel, Chowning, Serra & Smith), two are conference papers (Ghias et al., Jordà et al.), and one is a workshop paper (Smaragdis & Brown). This indicates that a timely and novel topic can be more important than the publishing venue, yet journal articles seem to be the most cited.

Two authors have more than one paper: Smith (3 papers), who specializes in audio signal processing; and Smaragdis (2 papers), who specializes in audio signal processing, machine learning and machine listening. Seven out of the 10 papers are written in collaboration. Notably, there are no female authors in this list. The main reason seems to be that this field has been (and still is) mostly male dominated. We can certainly do (and will do) better!

Acknowledgements: Thanks to Gerard Roma, Alexander Lerch, Dan Stowell, Justin Salamon, Lauren Hayes, Liz Dobson, Emilia Gómez, and Andrea Franceschini for their contributions to this conversation.

Note: The citation counts were retrieved from Google Scholar on November 12, 2016.